AIGC Weekly | #66 AIGC 每周简报 | 第 66 期

AIGC Top Papers and AI news of the week

本周 AIGC 顶级论文和 AI 新闻

Top Papers of the week(APR 29 - MAY 5)

本周热门论文(4 月 29 日 - 5 月 5 日)

1.) Extending Llama-3's Context Ten-Fold Overnight ( paper | code )

1. ) 一夜之间将 Llama-3 的上下文扩展十倍( 论文 | 代码 )

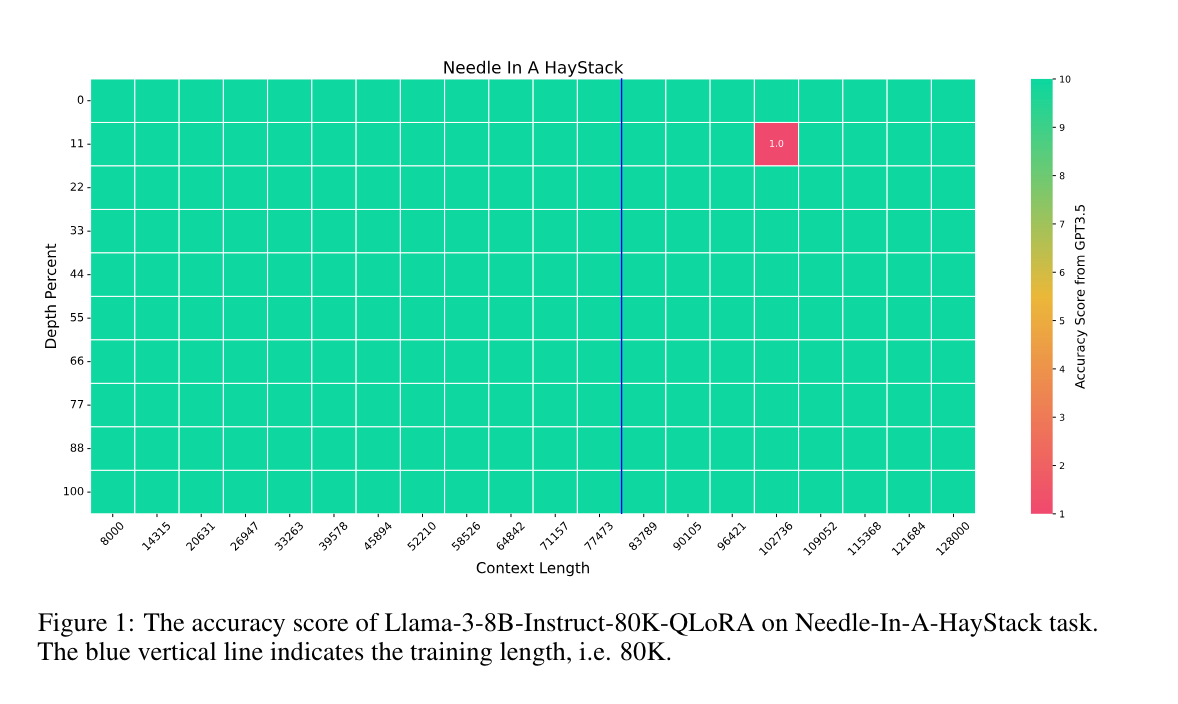

We extend the context length of Llama-3-8B-Instruct from 8K to 80K via QLoRA fine-tuning. The entire training cycle is super efficient, which takes 8 hours on one 8xA800 (80G) GPU machine. The resulted model exhibits superior performances across a broad range of evaluation tasks, such as NIHS, topic retrieval, and long-context language understanding; meanwhile, it also well preserves the original capability over short contexts.

我们通过 QLoRA 微调将 Llama-3-8B-Instruct 的上下文长度从 8K 扩展到 80K。整个训练周期非常高效,在一台 8xA800 (80G) GPU 机器上只需 8 小时。结果模型在广泛的评估任务中表现出色,例如 NIHS、主题检索和长上下文语言理解;同时,它也很好地保留了在短上下文中的原始能力。

2.) InstantFamily: Masked Attention for Zero-shot Multi-ID Image Generation ( paper )

2. ) InstantFamily: Masked Attention for Zero-shot Multi-ID Image Generation (paper)

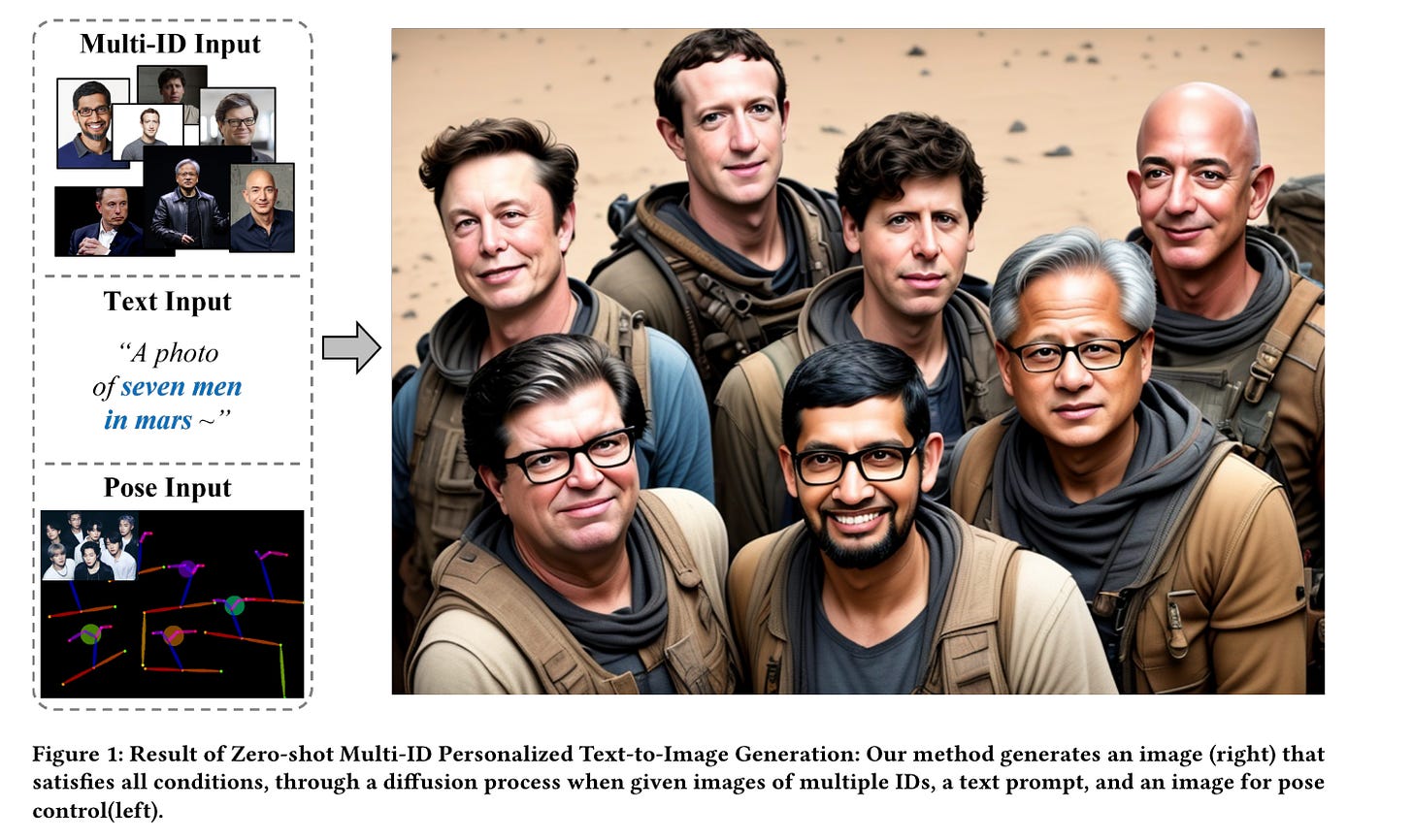

In the field of personalized image generation, the ability to create images preserving concepts has significantly improved. Creating an image that naturally integrates multiple concepts in a cohesive and visually appealing composition can indeed be challenging. This paper introduces "InstantFamily," an approach that employs a novel masked cross-attention mechanism and a multimodal embedding stack to achieve zero-shot multi-ID image generation. Our method effectively preserves ID as it utilizes global and local features from a pre-trained face recognition model integrated with text conditions.

在个性化图像生成领域,保持概念的图像生成能力显著提高。创建一个自然融合多个概念且具有连贯和视觉吸引力的图像确实具有挑战性。本文介绍了“InstantFamily”,这是一种采用新颖的掩码交叉注意力机制和多模态嵌入堆栈的方法,实现零样本多 ID 图像生成。我们的方法有效地保留了 ID,因为它利用了预训练人脸识别模型中的全局和局部特征,并结合了文本条件。

3.) Controllable Text Generation in the Instruction-Tuning Era ( paper )

3.)指令微调时代的可控文本生成(paper)

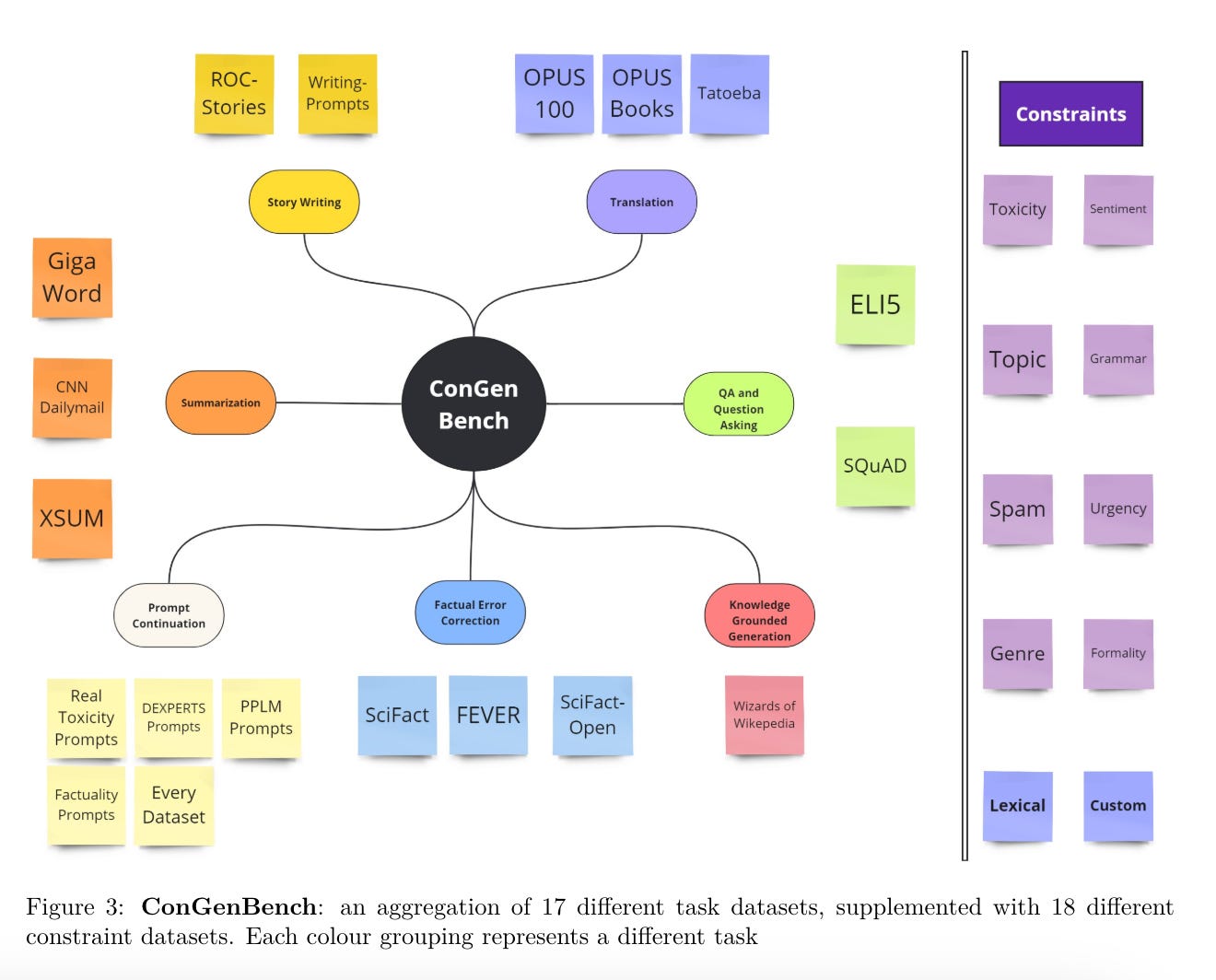

While most research on controllable text generation has focused on steering base Language Models, the emerging instruction-tuning and prompting paradigm offers an alternate approach to controllability. We compile and release ConGenBench, a testbed of 17 different controllable generation tasks, using a subset of it to benchmark the performance of 9 different baselines and methods on Instruction-tuned Language Models. To our surprise, we find that prompting-based approaches outperform controllable text generation methods on most datasets and tasks, highlighting a need for research on controllable text generation with Instruction-tuned Language Models in specific. Prompt-based approaches match human performance on most stylistic tasks while lagging on structural tasks, foregrounding a need to study more varied constraints and more challenging stylistic tasks.

虽然大多数关于可控文本生成的研究都集中在引导基础语言模型 (Language Models) 上,但新兴的指令微调 (Instruction-tuning) 和提示 (Prompting) 范式提供了一种可控性的替代方法。我们编译并发布了 ConGenBench,这是一个包含 17 个不同可控生成任务的测试平台,使用其中的一个子集来基准测试 9 种不同基线和方法在指令微调语言模型上的表现。令我们惊讶的是,我们发现基于提示的方法在大多数数据集和任务上优于可控文本生成方法,这突显了在特定的指令微调语言模型上进行可控文本生成研究的必要性。基于提示的方法在大多数风格任务上与人类表现相当,但在结构任务上有所滞后,这表明需要研究更多样化的约束和更具挑战性的风格任务。

4.) Error-Driven Uncertainty Aware Training ( paper )

4.)错误驱动的不确定性感知训练(paper)

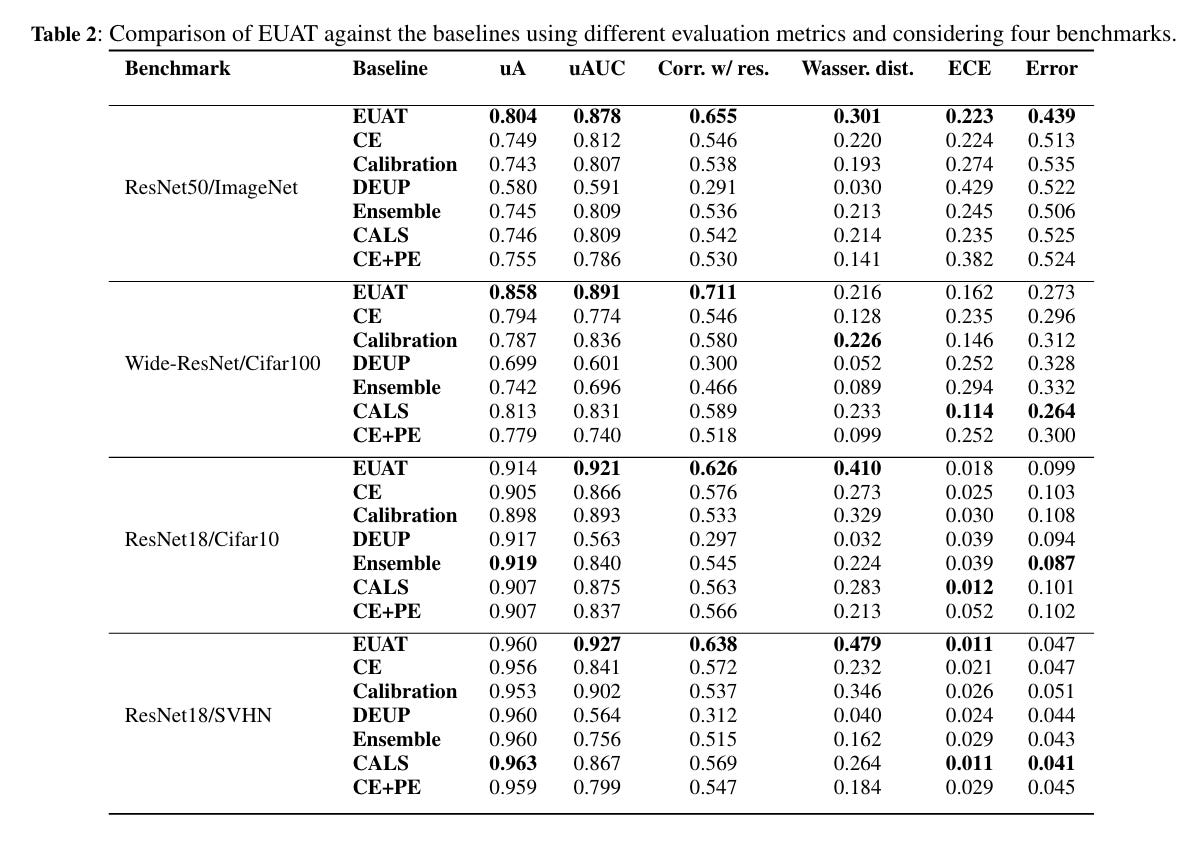

Neural networks are often overconfident about their predictions, which undermines their reliability and trustworthiness. In this work, we present a novel technique, named Error-Driven Uncertainty Aware Training (EUAT), which aims to enhance the ability of neural models to estimate their uncertainty correctly, namely to be highly uncertain when they output inaccurate predictions and low uncertain when their output is accurate. The EUAT approach operates during the model's training phase by selectively employing two loss functions depending on whether the training examples are correctly or incorrectly predicted by the model.

神经网络通常对其预测过于自信,这削弱了它们的可靠性和可信度。在这项工作中,我们提出了一种新技术,名为错误驱动的不确定性感知训练 (Error-Driven Uncertainty Aware Training, EUAT),旨在增强神经模型正确估计其不确定性的能力,即在输出不准确预测时高度不确定,而在输出准确预测时低度不确定。EUAT 方法在模型的训练阶段运行,通过根据训练样本是否被模型正确预测来选择性地使用两种损失函数。

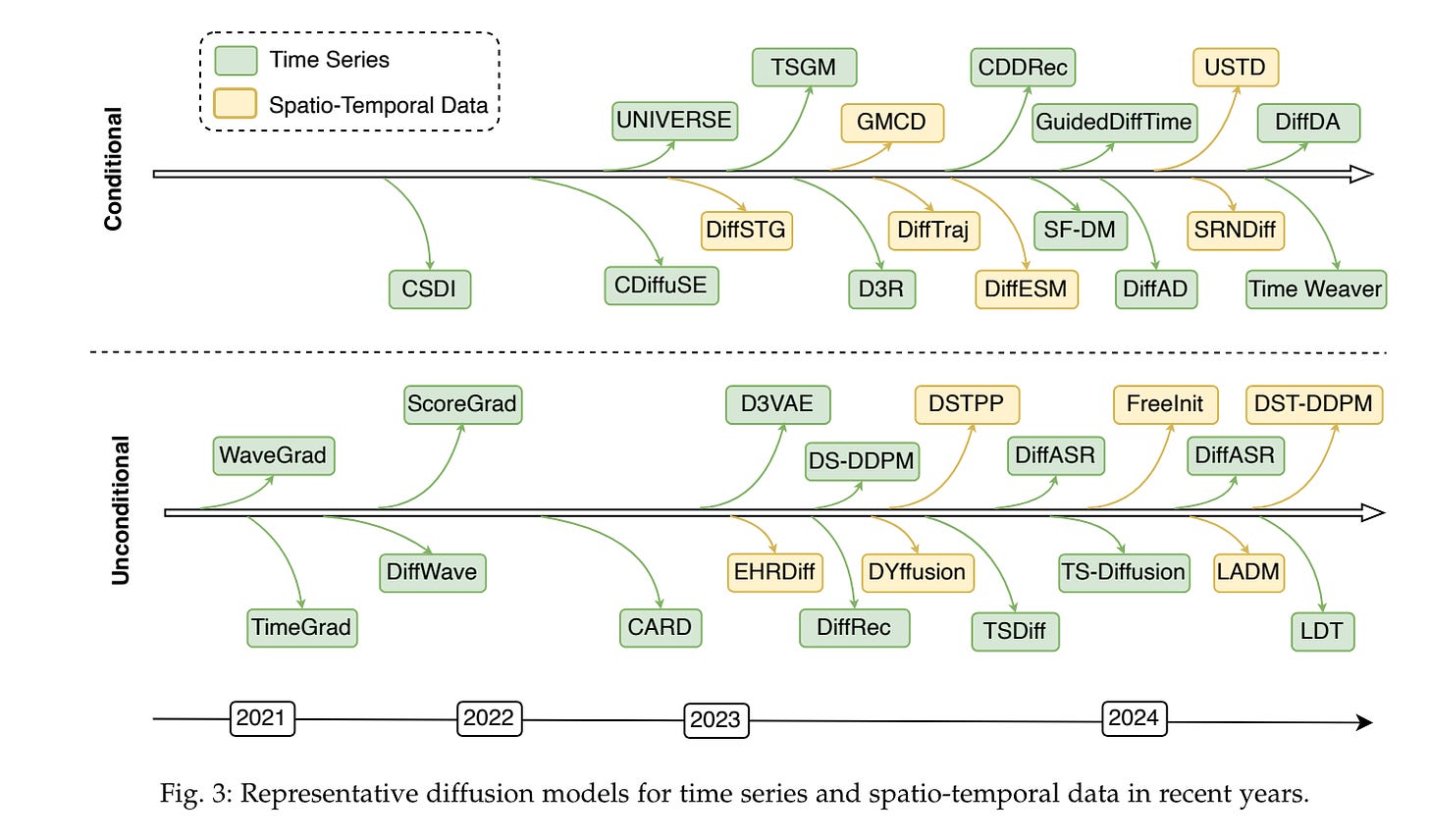

5.) A Survey on Diffusion Models for Time Series and Spatio-Temporal Data ( paper )

5. ) 时间序列和时空数据扩散模型综述 ( paper )

The study of time series data is crucial for understanding trends and anomalies over time, enabling predictive insights across various sectors. Spatio-temporal data, on the other hand, is vital for analyzing phenomena in both space and time, providing a dynamic perspective on complex system interactions. Recently, diffusion models have seen widespread application in time series and spatio-temporal data mining. Not only do they enhance the generative and inferential capabilities for sequential and temporal data, but they also extend to other downstream tasks. In this survey, we comprehensively and thoroughly review the use of diffusion models in time series and spatio-temporal data, categorizing them by model category, task type, data modality, and practical application domain.

6.) U-Nets as Belief Propagation: Efficient Classification, Denoising, and Diffusion in Generative Hierarchical Models ( paper )

6. ) U-Nets 作为信念传播:生成层次模型中的高效分类、去噪和扩散(论文)

U-Nets are among the most widely used architectures in computer vision, renowned for their exceptional performance in applications such as image segmentation, denoising, and diffusion modeling. However, a theoretical explanation of the U-Net architecture design has not yet been fully established.

U-Net 是计算机视觉中最广泛使用的架构之一,以其在图像分割、去噪和扩散建模等应用中的卓越表现而闻名。然而,U-Net 架构设计的理论解释尚未完全确立。

This paper introduces a novel interpretation of the U-Net architecture by studying certain generative hierarchical models, which are tree-structured graphical models extensively utilized in both language and image domains.

本文通过研究某些生成层次模型,介绍了对 U-Net 架构的一种新颖解释。这些生成层次模型是广泛应用于语言和图像领域的树状图形模型。

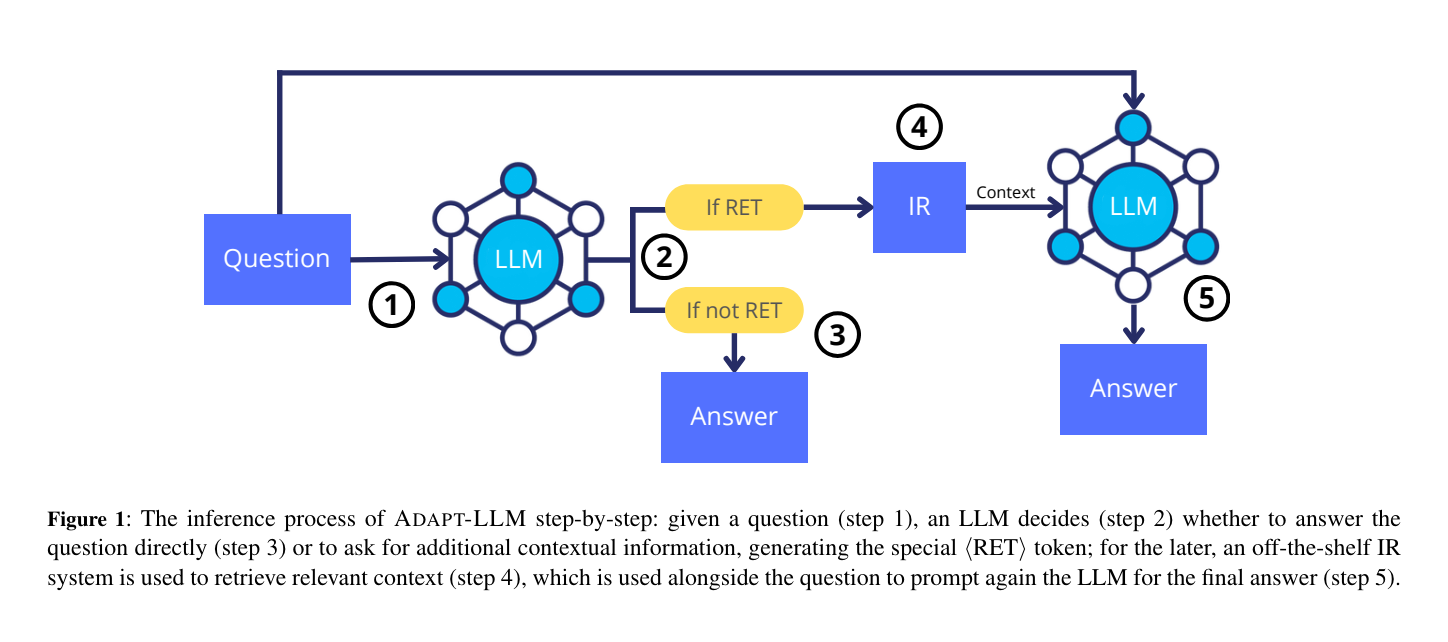

7.) When to Retrieve: Teaching LLMs to Utilize Information Retrieval Effectively ( paper )

7.)何时检索:教LLMs有效利用信息检索(论文)

In this paper, we demonstrate how Large Language Models (LLMs) can effectively learn to use an off-the-shelf information retrieval (IR) system specifically when additional context is required to answer a given question. Given the performance of IR systems, the optimal strategy for question answering does not always entail external information retrieval; rather, it often involves leveraging the parametric memory of the LLM itself. Prior research has identified this phenomenon in the PopQA dataset, wherein the most popular questions are effectively addressed using the LLM's parametric memory, while less popular ones require IR system usage. Following this, we propose a tailored training approach for LLMs, leveraging existing open-domain question answering datasets. Here, LLMs are trained to generate a special token, <RET>, when they do not know the answer to a question.

在本文中,我们展示了大语言模型 (LLM) 如何在需要额外上下文来回答给定问题时,能够有效地学习使用现成的信息检索 (IR) 系统。鉴于 IR 系统的性能,问题回答的最佳策略并不总是需要外部信息检索;相反,它通常涉及利用 LLM 本身的参数记忆。先前的研究在 PopQA 数据集中已经发现了这一现象,其中最受欢迎的问题可以通过 LLM 的参数记忆有效解决,而不太受欢迎的问题则需要使用 IR 系统。基于此,我们提出了一种针对 LLM 的定制训练方法,利用现有的开放域问答数据集。在这里,当 LLM 不知道问题的答案时,它们会被训练生成一个特殊的 Token,<RET>。

8.) StoryDiffusion: Consistent Self-Attention for Long-Range Image and Video Generation ( paper | code )

8.) StoryDiffusion: 长程图像和视频生成的一致性自注意力( 论文 | 代码 )

For recent diffusion-based generative models, maintaining consistent content across a series of generated images, especially those containing subjects and complex details, presents a significant challenge. In this paper, we propose a new way of self-attention calculation, termed Consistent Self-Attention, that significantly boosts the consistency between the generated images and augments prevalent pretrained diffusion-based text-to-image models in a zero-shot manner. To extend our method to long-range video generation, we further introduce a novel semantic space temporal motion prediction module, named Semantic Motion Predictor.

对于最近基于扩散的生成模型来说,保持一系列生成图像中内容的一致性,特别是那些包含主体和复杂细节的图像,仍然是一个重大挑战。在本文中,我们提出了一种新的自注意力计算方法,称为一致性自注意力 (Consistent Self-Attention),它显著提高了生成图像之间的一致性,并以零样本 (Zero-shot) 方式增强了流行的预训练基于扩散的文本生成图像模型。为了将我们的方法扩展到长距离视频生成,我们进一步引入了一种新的语义空间时间运动预测模块,称为语义运动预测器 (Semantic Motion Predictor)。

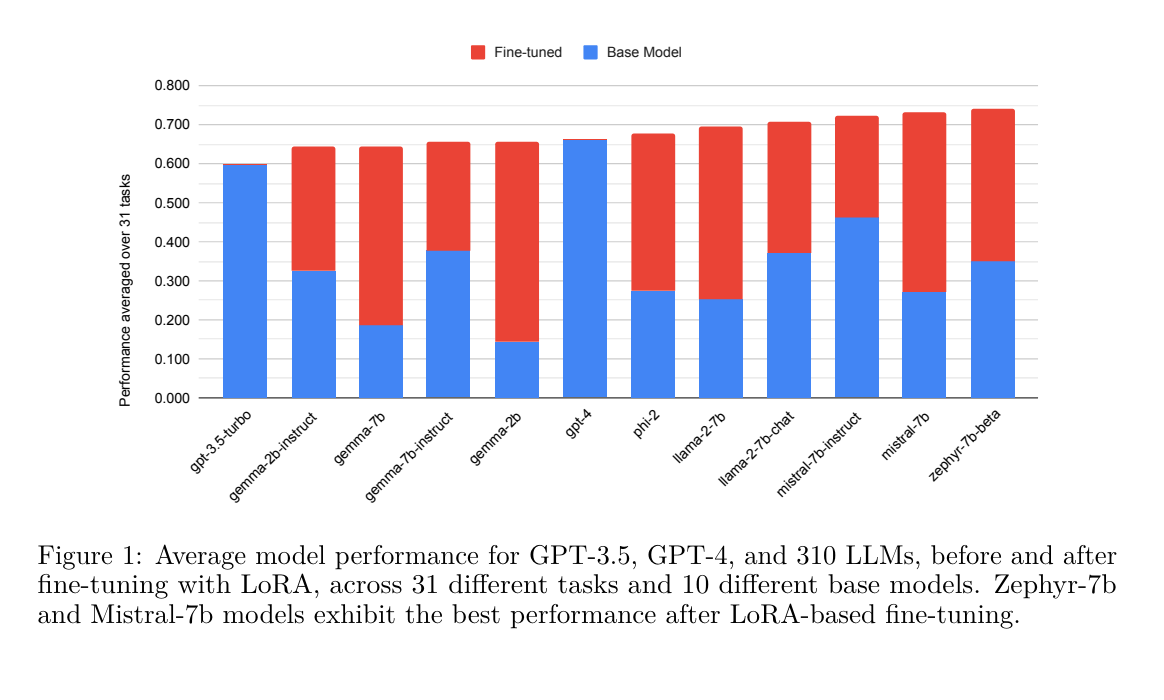

9.) LoRA Land: 310 Fine-tuned LLMs that Rival GPT-4, A Technical Report ( paper )

9. ) LoRA Land: 310 个微调的 LLMs,可媲美 GPT-4,一份技术报告( 论文 )

Low Rank Adaptation (LoRA) has emerged as one of the most widely adopted methods for Parameter Efficient Fine-Tuning (PEFT) of Large Language Models (LLMs). LoRA reduces the number of trainable parameters and memory usage while achieving comparable performance to full fine-tuning. We aim to assess the viability of training and serving LLMs fine-tuned with LoRA in real-world applications. First, we measure the quality of LLMs fine-tuned with quantized low rank adapters across 10 base models and 31 tasks for a total of 310 models. We find that 4-bit LoRA fine-tuned models outperform base models by 34 points and GPT-4 by 10 points on average.

低秩适应 (Low Rank Adaptation, LoRA) 已成为大语言模型 (Large Language Models, LLM) 参数高效微调 (Parameter Efficient Fine-Tuning, PEFT) 中最广泛采用的方法之一 [1001]。LoRA 在减少可训练参数和内存使用的同时,能够实现与全量微调相当的性能。我们旨在评估使用 LoRA 微调的 LLM 在实际应用中的可行性。首先,我们测量了使用量化低秩适配器微调的 LLM 在 10 个基础模型和 31 个任务中的质量,总共评估了 310 个模型。我们发现,4-bit LoRA 微调模型平均比基础模型高出 34 分,比 GPT-4 高出 10 分。

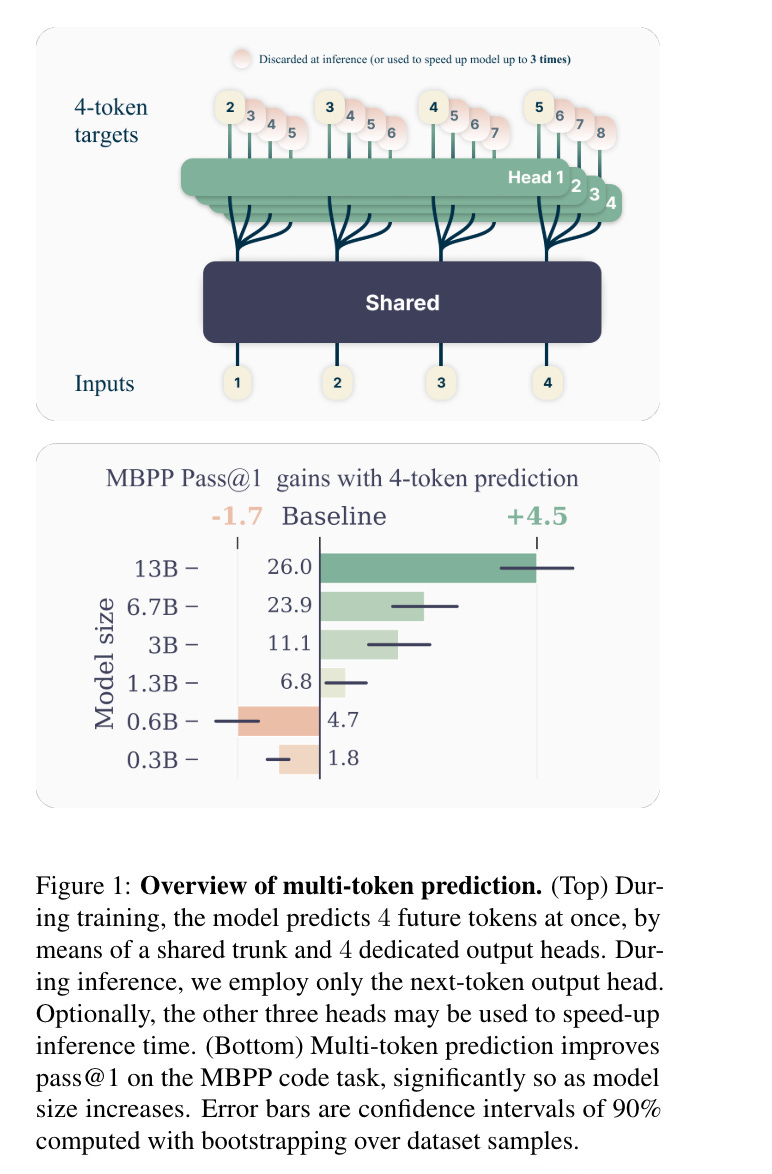

10.) Better & Faster Large Language Models via Multi-token Prediction ( paper )

10. ) 通过多 Token 预测实现更好更快的大语言模型(论文)

Large language models such as GPT and Llama are trained with a next-token prediction loss. In this work, we suggest that training language models to predict multiple future tokens at once results in higher sample efficiency. More specifically, at each position in the training corpus, we ask the model to predict the following n tokens using n independent output heads, operating on top of a shared model trunk. Considering multi-token prediction as an auxiliary training task, we measure improved downstream capabilities with no overhead in training time for both code and natural language models.

大语言模型(Large Language Model),如 GPT 和 Llama,是通过下一个 Token 预测损失进行训练的。在这项工作中,我们建议训练语言模型一次预测多个未来的 Token,这样可以提高样本效率。更具体地说,在训练语料库的每个位置,我们要求模型使用 n 个独立的输出头预测接下来的 n 个 Token,这些输出头在共享的模型主干上运行。将多 Token 预测视为辅助训练任务,我们测量了代码和自然语言模型在下游能力上的提升,而训练时间没有增加。

AIGC News of the week(APR 29 - MAY 5)

本周 AIGC 新闻(4 月 29 日 - 5 月 5 日)

1.) Llama-3 70B Instruct Gradient 1048K ( model )

Llama-3 70B Instruct Gradient 1048K(模型)

2.) llmc: Towards Accurate and Efficient LLM Compression ( repo )

2.) llmc: 迈向准确且高效的 LLM 压缩 ( repo )

3.) 6Img-to-3D: Few-Image Large-Scale Outdoor Driving Scene Reconstruction ( repo )

3. ) 6Img-to-3D: 少样本大规模户外驾驶场景重建( repo )

4.) STMC: Spatio-Temporal Motion Collage ( repo )

4.)STMC: 时空运动拼贴( repo )

5.) ConsistentID : Portrait Generation with Multimodal Fine-Grained Identity Preserving ( repo )

5. ) ConsistentID:使用多模态细粒度身份保持的肖像生成( repo )

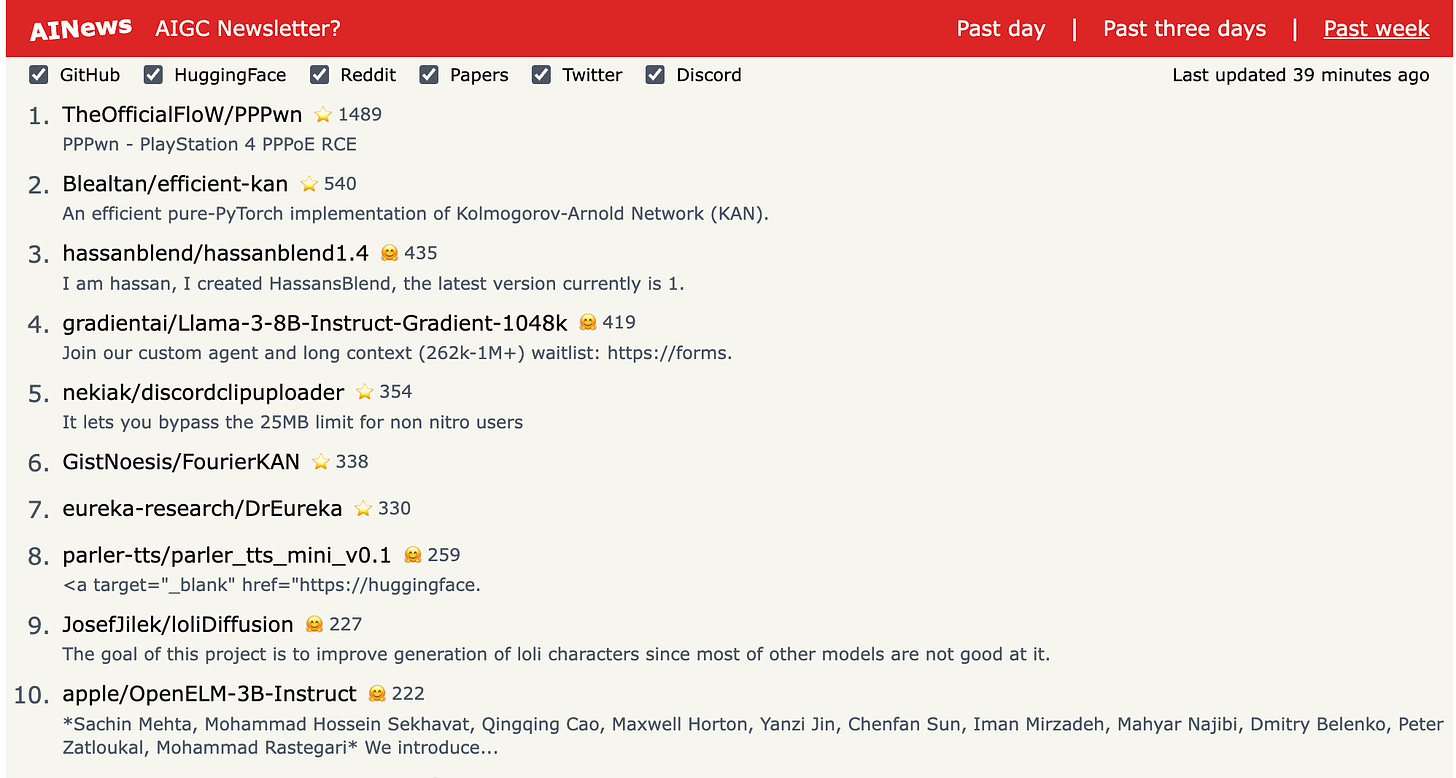

more AIGC News: AINews 更多 AIGC 新闻:AINews